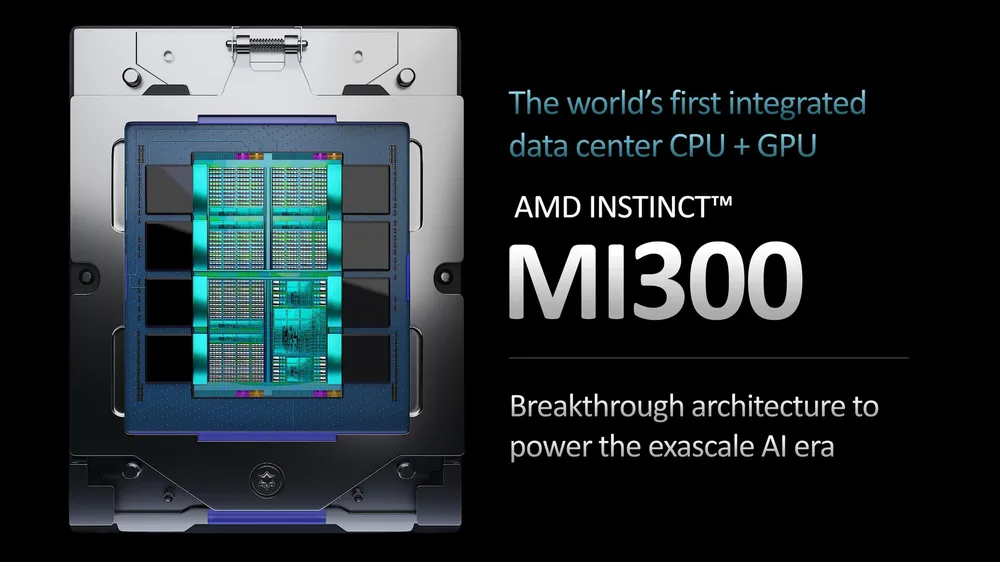

AMD Instinct MI300

Introduction to AMD Instinct MI300

The AMD Instinct MI300 series is a family of high-performance accelerators designed for generative AI workloads and HPC applications. The MI300 series is built on a 5 nm process and features the Aqua Vanjaram graphics processor. The series includes two models, the MI300A and the MI300X, each with its own architecture and design.

Architecture and Performance

The AMD Instinct MI300 series features an advanced architecture designed for high performance and efficiency. The MI300X is a GPU designed for AI inference workloads; AMD claims it delivers up to 1.6X the performance of the Nvidia H100. The MI300A is an APU that combines CPU and GPU cores in one package, providing a balanced architecture for a wide range of workloads, including AI training and HPC applications. Both models use on-package high-bandwidth memory (HBM3); storage such as NVMe is provided by the host system, not by the accelerator itself.

Benchmarks and Performance Results

The AMD Instinct MI300 series has been benchmarked on a variety of workloads, including AI inference, AI training, and HPC applications. In the comparisons cited in this article, the MI300X reaches up to 1.6X the AI inference performance of the Nvidia H100 and the MI300A up to 1.2X, while AI training performance is similar to the H100 for both models.
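A relative figure such as "1.6X" is a ratio of measured throughput on the same workload. The sketch below shows one way such a ratio, and a simple throughput-per-watt efficiency figure, could be computed. The `BenchmarkResult` type, the function names, and all numbers are hypothetical placeholders for illustration; they are not measurements of any MI300 or H100 system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BenchmarkResult:
    """One measured run; every value is supplied by the caller (hypothetical type)."""
    device: str
    tokens_per_second: float   # measured inference throughput
    board_power_watts: float   # average board power during the run


def speedup(candidate: BenchmarkResult, baseline: BenchmarkResult) -> float:
    """Return candidate throughput as a multiple of baseline throughput."""
    if baseline.tokens_per_second <= 0:
        raise ValueError("baseline throughput must be positive")
    return candidate.tokens_per_second / baseline.tokens_per_second


def throughput_per_watt(result: BenchmarkResult) -> float:
    """Return tokens per second per watt, a simple efficiency metric."""
    if result.board_power_watts <= 0:
        raise ValueError("board power must be positive")
    return result.tokens_per_second / result.board_power_watts


# Placeholder numbers only, chosen to make the arithmetic easy to follow.
accelerator_a = BenchmarkResult("accelerator_a", tokens_per_second=300.0, board_power_watts=600.0)
accelerator_b = BenchmarkResult("accelerator_b", tokens_per_second=200.0, board_power_watts=500.0)

ratio = speedup(accelerator_a, accelerator_b)
print(f"{accelerator_a.device} is {ratio:.2f}X {accelerator_b.device}")
print(f"efficiency: {throughput_per_watt(accelerator_a):.2f} vs "
      f"{throughput_per_watt(accelerator_b):.2f} tokens/s per watt")
```

A ratio like this is only meaningful when both runs use the same model, batch size, precision, and software stack, so those conditions should be reported alongside any "X times faster" claim.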

What is the process node used in the AMD Instinct MI300 series?

The AMD Instinct MI300 series is built on the 5 nm process node.

What is the graphics processor used in the MI300 series?

The MI300 series features the Aqua Vanjaram graphics processor.

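What is the memory and storage configuration of the MI300 series?

The MI300 series uses on-package high-bandwidth memory (HBM3): 128 GB on the MI300A and 192 GB on the MI300X. The accelerators have no on-board storage; NVMe storage is provided by the host system.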

What are the power consumption and form factor of the MI300 series?

Per AMD's published specifications, the MI300X is rated at 750 W and ships as an OAM (OCP Accelerator Module). The MI300A is a socketed APU (SH5 socket) with a default TDP of 550 W, configurable up to 760 W.

Specifications

| Specification | MI300A | MI300X |
|---|---|---|
| Process Node | 5 nm | 5 nm |
| Graphics Processor | Aqua Vanjaram | Aqua Vanjaram |
| Memory | 128 GB HBM3 | 192 GB HBM3 |
| Storage | None on-board (host-provided, e.g. NVMe) | None on-board (host-provided, e.g. NVMe) |
| AI Inference Performance | Up to 1.2X Nvidia H100 | Up to 1.6X Nvidia H100 |
| AI Training Performance | Similar to Nvidia H100 | Similar to Nvidia H100 |
| Power Consumption | 550 W (configurable up to 760 W) | 750 W |
| Form Factor | Socketed APU (SH5) | OAM module |